Building a Robot’s Eyes

DIDEN Robotics builds virtual industrial environments and trains perception AI models inside them. From visual data collection in NVIDIA Isaac Sim to a proprietary training framework, here is how DIDEN Robotics develops a robot’s visual intelligence.

Building an autonomous robot requires three core capabilities: perception to understand the surrounding environment, state estimation to track the robot’s own position and orientation, and locomotion control to execute movement. DIDEN Robotics develops all three in-house. This series examines each one. First up: perception — the robot’s eyes.

Without Eyes, a Robot Cannot Take a Single Step

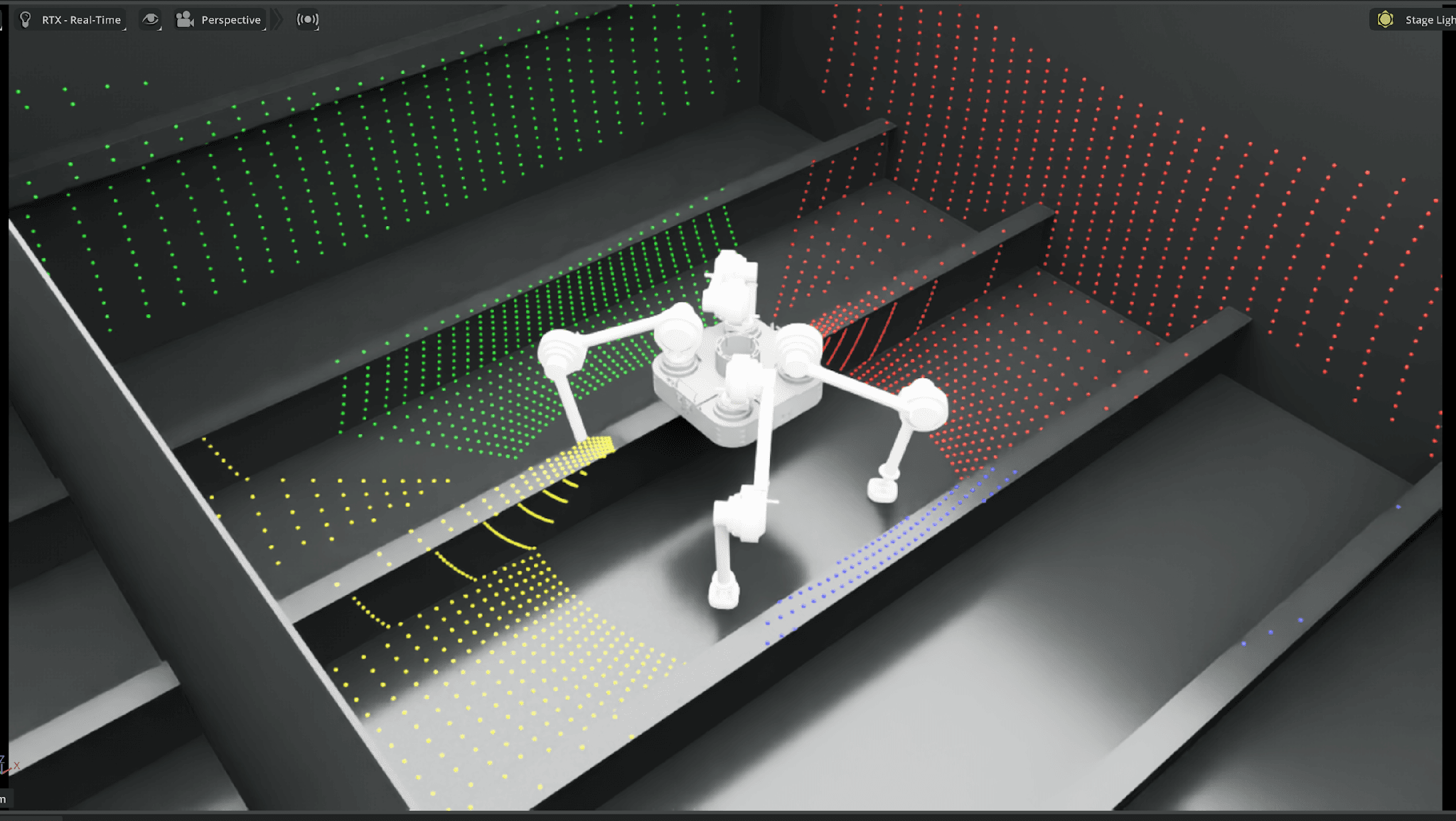

Inside NVIDIA Isaac Sim, a virtual industrial environment comes into view. Bulkheads and stiffeners repeat across a shipyard block structure, with access holes spaced at intervals. A DIDEN Robotics robot walks across the surface. This is not a real shipyard. It is a virtual environment built in code, modeled after the structural patterns of real shipyard assembly blocks.

A robot collecting point cloud data in a virtual environment on NVIDIA Isaac Sim

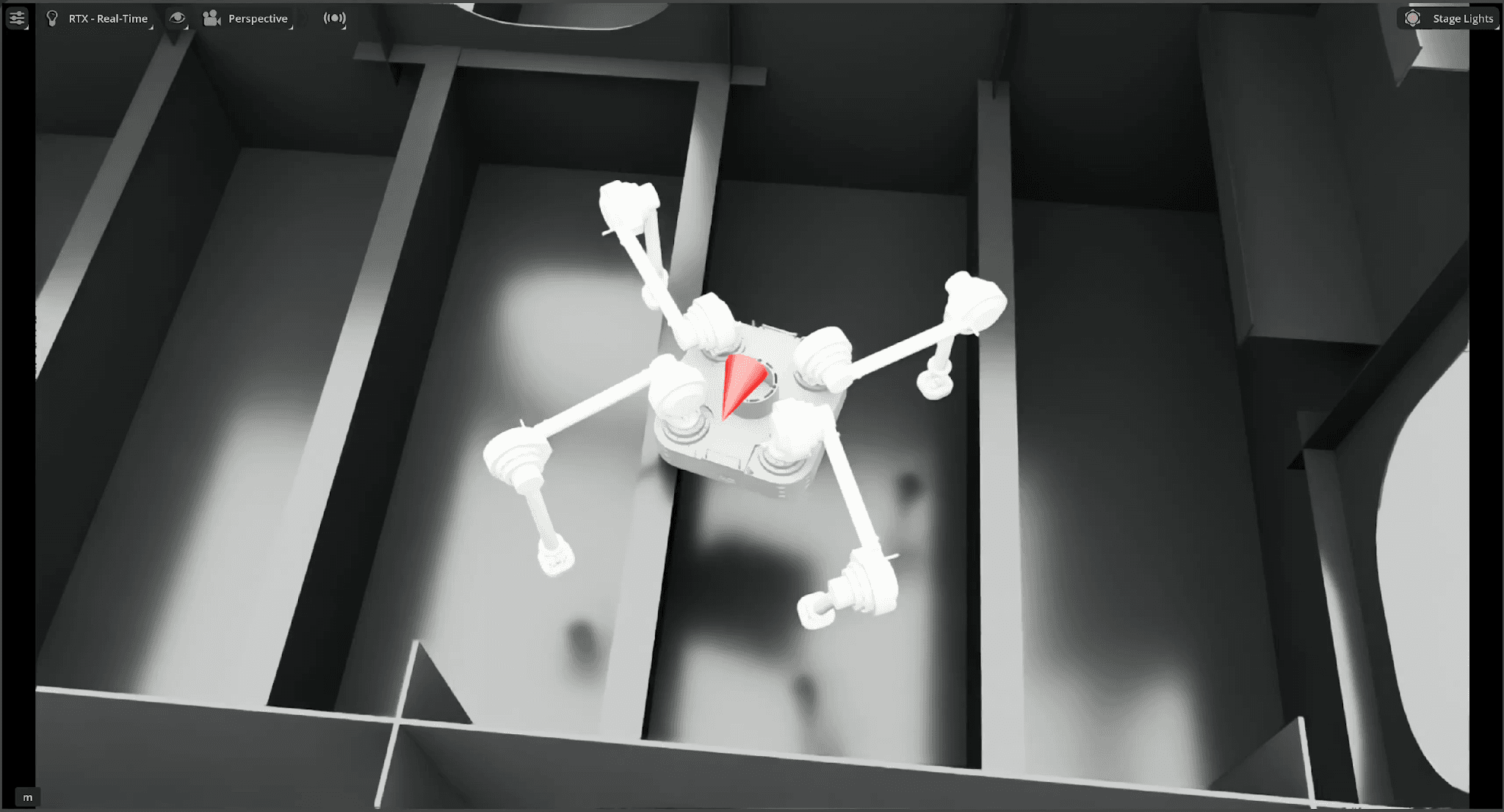

Before a robot can take its next step, it must know what lies ahead: where the obstacles are, what the surface looks like, and how tall the structures are. The AI that converts depth camera data into a 3D map of the surroundings is the robot’s eyes.

What the robot’s depth camera sees. Brightness encodes distance information
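The first, purely geometric step from depth image to 3D map can be sketched as follows. Each valid pixel is back-projected into a 3D point with a standard pinhole camera model, and the points are then binned into voxels. This is a minimal illustration, not DIDEN Robotics’ code. The function names, the intrinsics (`fx`, `fy`, `cx`, `cy`), and the voxel size are all hypothetical. The sketch only captures surfaces the camera actually hits, which is the limitation the learned model described later is meant to overcome.

```python
import math

def backproject(depth, fx, fy, cx, cy):
    """Turn a depth image (rows of metric depths, 0 = no return) into camera-frame 3D points.

    Pinhole model: x = (u - cx) * z / fx, y = (v - cy) * z / fy.
    """
    points = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            if z <= 0.0:  # invalid pixel: no depth reading
                continue
            points.append(((u - cx) * z / fx, (v - cy) * z / fy, z))
    return points

def voxelize(points, voxel_size):
    """Return the set of integer voxel indices that contain at least one point."""
    return {tuple(math.floor(c / voxel_size) for c in p) for p in points}

# 2x3 depth image of a flat wall 1 m away; 0.0 marks a pixel with no depth return.
depth = [
    [1.0, 1.0, 1.0],
    [1.0, 0.0, 1.0],
]
pts = backproject(depth, fx=10.0, fy=10.0, cx=1.0, cy=0.5)
voxels = voxelize(pts, voxel_size=0.25)
print(len(pts), len(voxels))  # → 5 4
```

Several nearby points collapse into the same voxel, which is why the voxel count is lower than the point count.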

Training this AI requires massive amounts of data: thousands to tens of thousands of camera images, each paired with the actual 3D geometry at the location where it was captured. Collecting this in real industrial environments is impractical: production cannot be halted, many areas are inaccessible, and every site has different geometry.

So DIDEN Robotics generates data in virtual environments. And at the center of those virtual environments is a simulation pipeline built in-house.

Simulation Pipeline: From Isaac Sim to a Proprietary Framework

DIDEN Robotics’ perception development uses NVIDIA Isaac Sim as a visual data collection tool, and performs training in a proprietary framework developed by the team.

Virtual environments are built on NVIDIA Isaac Sim. DIDEN Robotics loads its proprietary robot model, places auto-generated terrain, and attaches virtual depth cameras matching real hardware specifications.

Isaac Sim’s role is to collect visual data within these virtual environments. As the robot moves, images from the depth camera and the corresponding 3D structural information at each location are recorded as paired data.

Training on the collected data takes place in a framework developed in-house by DIDEN Robotics.

This combination of an external platform for visual data generation and in-house technology for training forms the foundation of DIDEN Robotics’ perception development.

For reference, robot control tasks beyond perception — such as locomotion and upper-body control — use the MuJoCo Warp simulator for data acquisition, with the control learning pipeline also built and maintained in-house.

Generating Virtual Environments Automatically

While NVIDIA provides the simulation infrastructure, DIDEN Robotics builds the virtual environments themselves.

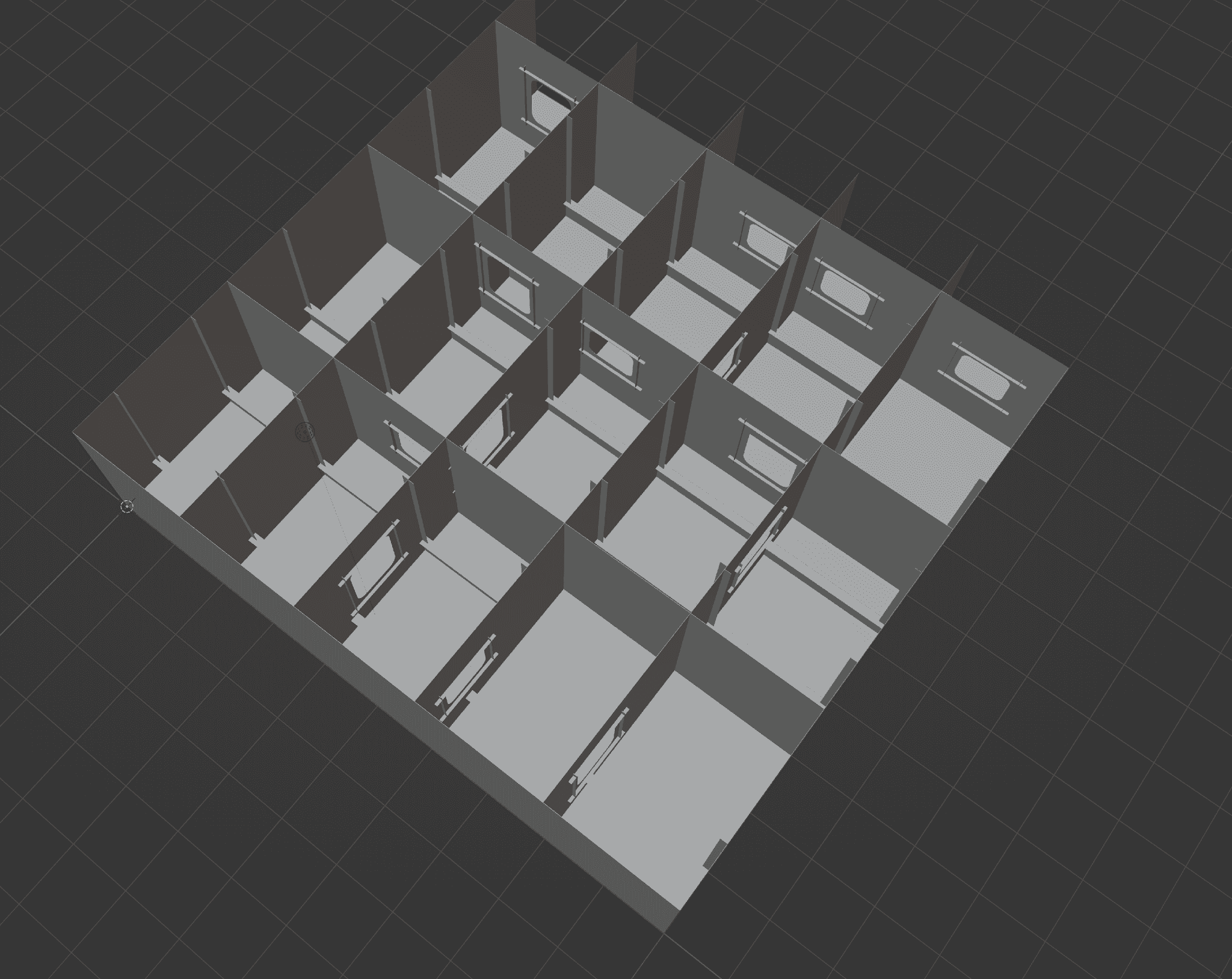

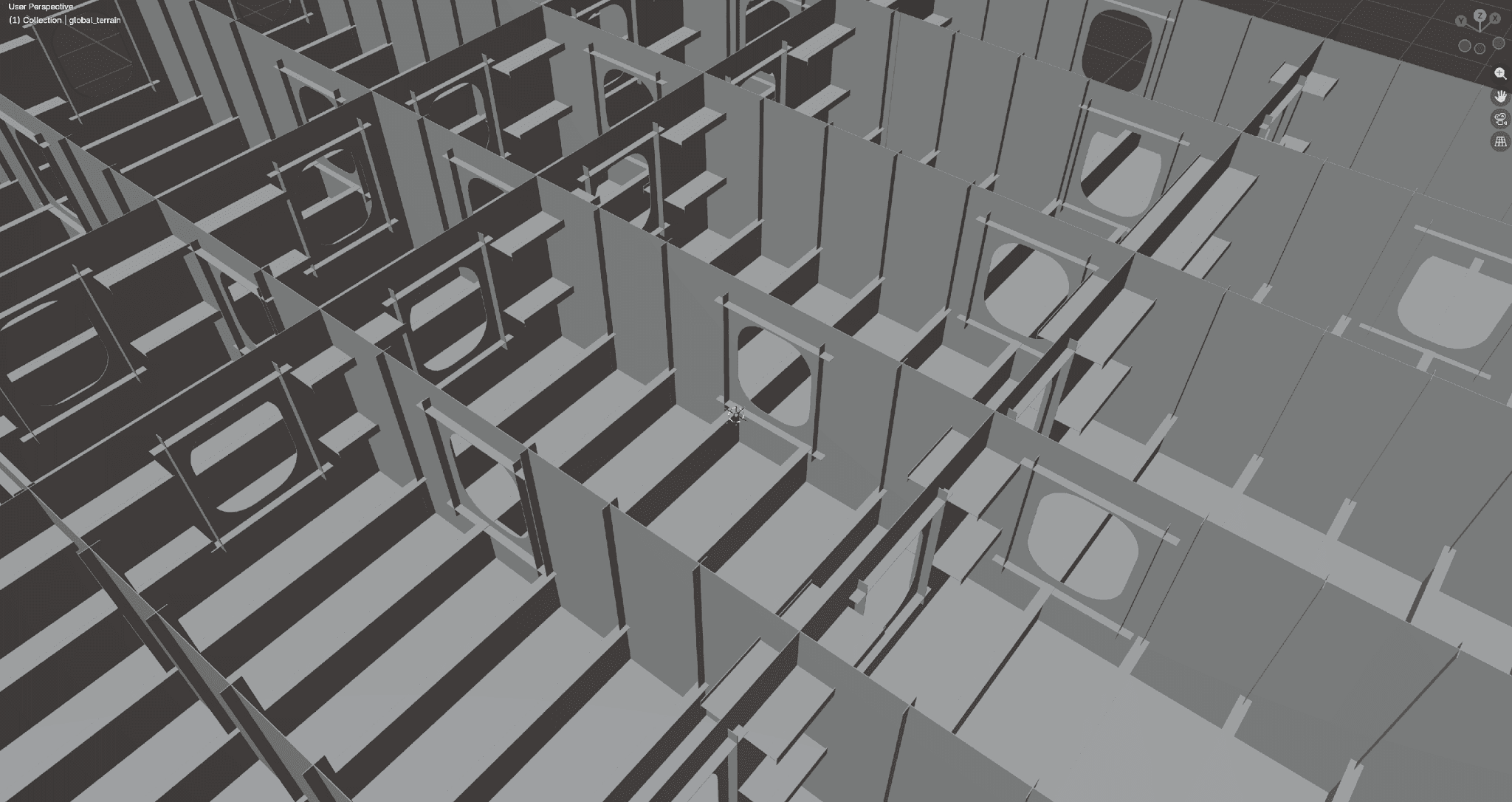

We started by analyzing the design rules of shipyard block structures: the spacing of bulkheads, the height of stiffeners, and the placement of access holes. These repeating patterns were extracted and translated into an automated terrain generation system. Parameters are randomized for each environment, so a single run produces hundreds of unique environments.
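The generation step can be illustrated with a toy version. The sketch below draws a fresh set of randomized parameters for every environment and turns each set into a 2D heightmap with bulkhead walls, access openings, and lower stiffener ridges. Everything here is hypothetical: the `BlockParams` fields, the parameter ranges, and the heightmap representation are assumptions chosen for illustration, not DIDEN Robotics’ actual generator. A heightmap cannot express an access hole as an opening with wall above it, so the hole is simplified to a gap in the wall.

```python
import random
from dataclasses import dataclass

@dataclass(frozen=True)
class BlockParams:
    """Hypothetical parameters for one shipyard-block-like terrain patch."""
    bulkhead_spacing: int    # cells between consecutive bulkheads (walls running along y)
    stiffener_spacing: int   # cells between stiffeners (low ridges parallel to bulkheads)
    stiffener_height: float  # meters
    bulkhead_height: float   # meters
    hole_width: int          # width in cells of the access opening cut into each bulkhead

def sample_params(rng):
    """Draw one random parameter set; these ranges are illustrative, not measured values."""
    return BlockParams(
        bulkhead_spacing=rng.randint(12, 20),
        stiffener_spacing=rng.randint(3, 5),
        stiffener_height=rng.uniform(0.1, 0.4),
        bulkhead_height=rng.uniform(1.0, 2.0),
        hole_width=rng.randint(2, 4),
    )

def generate_heightmap(p, size, rng):
    """Build a size x size heightmap (rows indexed [y][x], heights in meters) from one parameter set."""
    h = [[0.0] * size for _ in range(size)]
    for x in range(size):
        if x % p.bulkhead_spacing == 0:
            # Bulkhead: a tall wall across the patch with one access opening at a random y.
            hole_start = rng.randint(0, size - p.hole_width)
            for y in range(size):
                if not (hole_start <= y < hole_start + p.hole_width):
                    h[y][x] = p.bulkhead_height
        elif x % p.stiffener_spacing == 0:
            # Stiffener: a low ridge parallel to the bulkheads.
            for y in range(size):
                h[y][x] = p.stiffener_height
    return h

def generate_batch(n, size=32, seed=0):
    """One run: n environments, each built from freshly randomized parameters."""
    rng = random.Random(seed)
    envs = []
    for _ in range(n):
        p = sample_params(rng)
        envs.append((p, generate_heightmap(p, size, rng)))
    return envs

batch = generate_batch(100)
print(len(batch))  # → 100
```

Because regular structures reduce to a handful of numbers, widening or narrowing these ranges is enough to shift the whole distribution of generated environments.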

Bird’s-eye view of an auto-generated virtual environment. Shipyard block structures with bulkheads and stiffeners are reproduced in code

Close-up of the same environment. Structures of varying height and spacing are placed automatically based on parameters

The goal is not to create one fixed environment, but to let the robot experience in advance the diverse structural variations it might encounter in the field. This procedural generation approach is particularly effective for industrial environments with repetitive, regular structures like bulkheads and stiffeners. The higher the structural regularity, the more variations can be produced through parameter combinations alone.

Robots move through these virtual environments along diverse trajectories — following gaps between structures, crossing perpendicular to them, climbing walls. Fine-grained movement is randomized, even reproducing occlusion effects where the robot’s own legs block the camera’s view.
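A toy version of trajectory randomization might look like the following. It picks a traversal mode relative to the structures, lays out a nominal straight path, and perturbs every pose slightly. The mode names, step size, and Gaussian jitter are hypothetical. Wall climbing and the leg self-occlusion effect mentioned above depend on the full robot model and simulator, so the sketch does not reproduce them.

```python
import math
import random

def sample_trajectory(mode, rng, length=5.0, step=0.25, jitter=0.05):
    """Return (x, y, yaw) poses for one hypothetical traversal mode.

    Assumes structures run parallel to the y axis:
      "along"  -> walk down a gap between structures (heading +y)
      "across" -> walk perpendicular to the structures, crossing them (heading +x)
    Gaussian noise on position and heading stands in for fine-grained gait variation.
    """
    if mode == "along":
        base_yaw = math.pi / 2
    elif mode == "across":
        base_yaw = 0.0
    else:
        raise ValueError(f"unknown mode: {mode!r}")
    x0, y0 = rng.uniform(0.0, 1.0), rng.uniform(0.0, 1.0)  # random start point
    poses = []
    for i in range(int(length / step) + 1):
        s = i * step  # distance along the nominal straight path
        x = x0 + s * math.cos(base_yaw) + rng.gauss(0.0, jitter)
        y = y0 + s * math.sin(base_yaw) + rng.gauss(0.0, jitter)
        yaw = base_yaw + rng.gauss(0.0, jitter)
        poses.append((x, y, yaw))
    return poses

rng = random.Random(42)
mode = rng.choice(["along", "across"])
poses = sample_trajectory(mode, rng)
print(mode, len(poses))
```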

An AI That Infers What It Cannot See

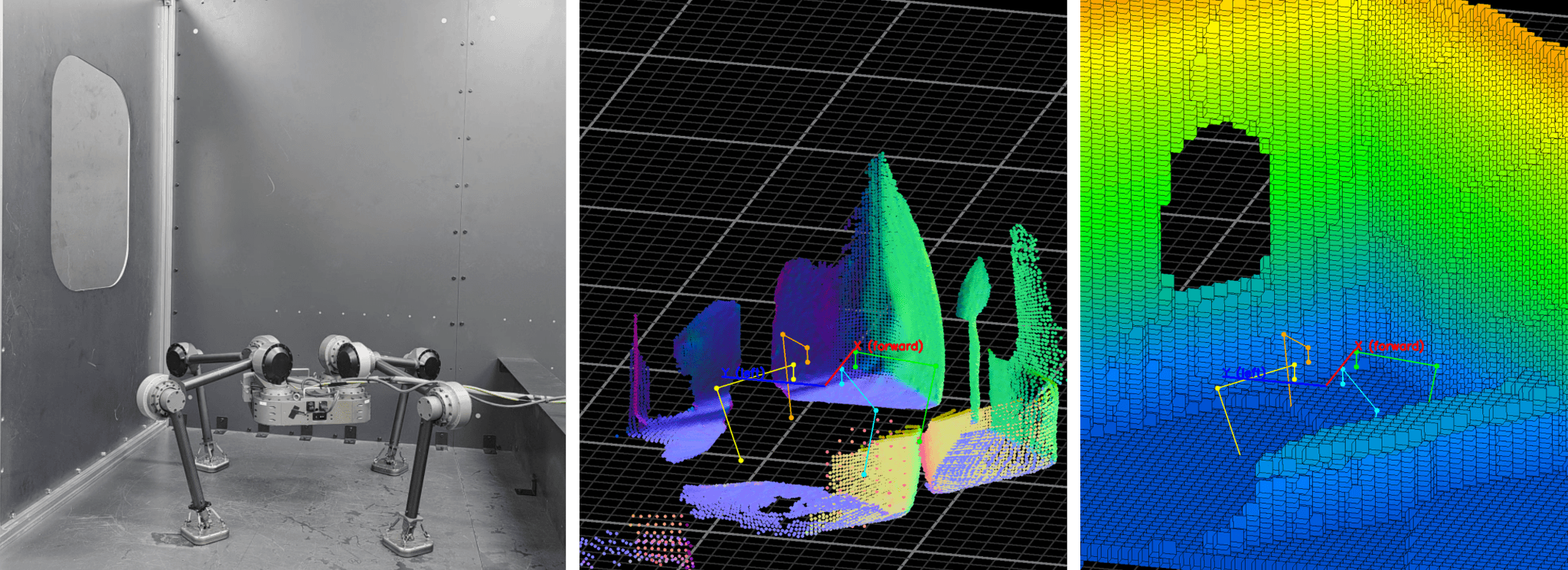

The data collected in simulation trains a Voxel Reconstruction model.

Think of sweeping a flashlight across a dark room. You see only what the beam hits, but you fill in the rest based on patterns you have already observed. This model does the same — given partial depth camera data, it infers the full 3D structure around the robot, including regions the camera cannot see.

This training is possible in simulation because the virtual environment provides both the camera input and the perfect 3D ground truth simultaneously. The ability to generate this ground truth data at scale — something impractical in real environments — is the fundamental advantage of simulation-based learning.
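Because every environment is generated from known parameters, the ground truth can be computed exactly instead of measured. The sketch below assumes the terrain is available as a 2D heightmap in meters. Each voxel is marked solid if its center lies below the surface, and a robot-centric window is cropped out to pair with the camera frame captured at that location. The function names, the `[k][y][x]` layout, and treating out-of-map cells as empty are illustrative assumptions.

```python
def heightmap_to_occupancy(heightmap, cell_size, z_levels):
    """Exact occupancy grid indexed [k][y][x]: True where the voxel center lies below the surface."""
    occ = []
    for k in range(z_levels):
        z_center = (k + 0.5) * cell_size
        occ.append([[h > z_center for h in row] for row in heightmap])
    return occ

def crop_around(occ, cy, cx, radius):
    """Cut a (2*radius+1)-cell square window centered on cell (cy, cx) from every z level.

    Cells outside the map are treated as empty (False).
    """
    ny, nx = len(occ[0]), len(occ[0][0])
    return [
        [
            [layer[y][x] if 0 <= y < ny and 0 <= x < nx else False
             for x in range(cx - radius, cx + radius + 1)]
            for y in range(cy - radius, cy + radius + 1)
        ]
        for layer in occ
    ]

def count_solid(grid):
    """Number of occupied voxels in a [k][y][x] grid."""
    return sum(v for layer in grid for row in layer for v in row)

# 4x4 cells of 0.25 m: flat floor with a 1.0 m wall along column x = 2.
heightmap = [[1.0 if x == 2 else 0.0 for x in range(4)] for _ in range(4)]
occ = heightmap_to_occupancy(heightmap, cell_size=0.25, z_levels=8)
window = crop_around(occ, cy=1, cx=1, radius=1)
print(count_solid(occ), count_solid(window))  # → 16 12
```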

DIDEN Robotics has also developed proprietary techniques to improve the vision model’s performance. A visibility masking method ensures only regions the robot has actually observed are included in the training signal, teaching the model to distinguish between observable and non-observable areas.
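One common way to implement this kind of masking is to compute the reconstruction loss only over voxels flagged as observed. In this setting, "observed" would plausibly mean seen at some point along the recorded trajectory, so the model is still asked to fill in voxels outside the current frame. The sketch below shows a masked binary cross-entropy over flattened voxel lists. The function name, the clamping constant, and the choice of binary cross-entropy are assumptions for illustration, and DIDEN Robotics’ own formulation may differ.

```python
import math

def masked_bce(pred_probs, targets, visible, eps=1e-7):
    """Mean binary cross-entropy over voxels where visible is True.

    pred_probs: predicted occupancy probabilities, one per voxel
    targets:    ground-truth occupancy (0 or 1), one per voxel
    visible:    True if the voxel was actually observed; False voxels add nothing to the loss
    """
    total, count = 0.0, 0
    for p, t, seen in zip(pred_probs, targets, visible):
        if not seen:
            continue  # never observed: excluded from the training signal
        p = min(max(p, eps), 1.0 - eps)  # clamp so log() never sees 0
        total += -(t * math.log(p) + (1 - t) * math.log(1.0 - p))
        count += 1
    return total / count if count else 0.0

# The third voxel is unobserved, so its (badly wrong) prediction does not affect the loss.
loss = masked_bce([0.9, 0.2, 0.01], [1, 0, 1], [True, True, False])
print(round(loss, 4))  # → 0.1643
```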

Previously observed structures are retained in memory so that environmental information persists even after the robot moves on. Remembering what it has seen while inferring what it has not — this is what DIDEN Robotics demands of its perception model.
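Observations persist after the robot moves on when they are stored in a fixed world frame. The sketch below assumes planar poses `(x, y, yaw)` and a simple set of occupied voxels. Each frame’s robot-frame points are transformed into world coordinates before being stored, so a structure seen earlier can still be queried after it has left the camera’s view. The class and method names are hypothetical. A real system would more likely use full 6-DoF poses and probabilistic fusion (for example, log-odds occupancy) rather than a plain set, and the learned inference of unseen regions is a separate step not shown here.

```python
import math

class PersistentVoxelMap:
    """Minimal world-frame memory of occupied voxels (hypothetical illustration)."""

    def __init__(self, voxel_size):
        self.voxel_size = voxel_size
        self.occupied = set()

    def integrate(self, points_robot, pose):
        """Add robot-frame points (x, y, z) observed at planar pose (px, py, yaw)."""
        px, py, yaw = pose
        c, s = math.cos(yaw), math.sin(yaw)
        for x, y, z in points_robot:
            wx = px + c * x - s * y  # rotate by yaw, then translate into the world frame
            wy = py + s * x + c * y
            self.occupied.add((
                math.floor(wx / self.voxel_size),
                math.floor(wy / self.voxel_size),
                math.floor(z / self.voxel_size),
            ))

    def query_local(self, pose, radius):
        """Return stored voxels whose centers lie within radius (planar, meters) of the robot."""
        px, py, _ = pose
        out = []
        for ix, iy, iz in self.occupied:
            cx = (ix + 0.5) * self.voxel_size
            cy = (iy + 0.5) * self.voxel_size
            if math.hypot(cx - px, cy - py) <= radius:
                out.append((ix, iy, iz))
        return out

m = PersistentVoxelMap(voxel_size=0.5)
m.integrate([(1.0, 0.0, 0.5)], pose=(0.0, 0.0, 0.0))  # structure seen 1 m ahead
m.integrate([], pose=(2.0, 0.0, math.pi))             # robot walked past and turned; nothing new seen
print(m.query_local((2.0, 0.0, math.pi), radius=2.0))  # → [(2, 0, 1)]
```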

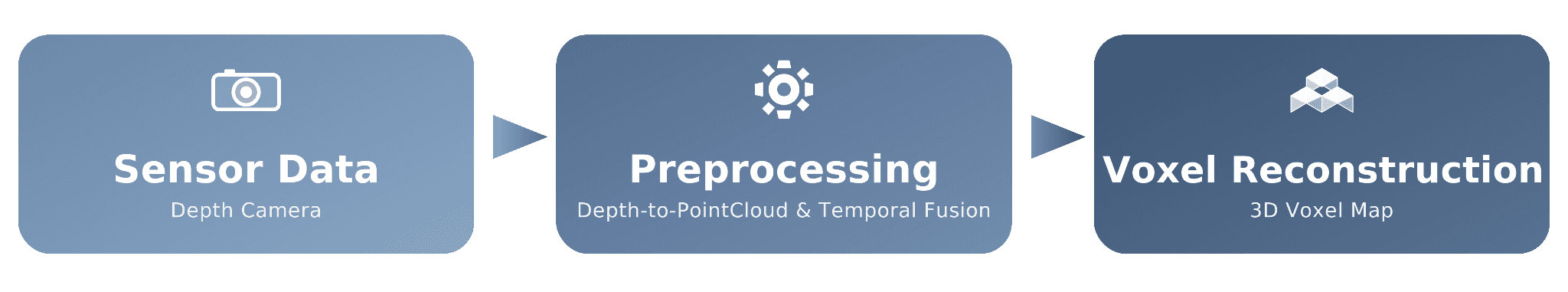

Overall architecture of the Voxel Reconstruction pipeline

Built in Simulation, Validated in Reality

The ultimate question we must answer is whether AI trained in simulation actually works in the real world.

A gap between the virtual and the physical still exists, and DIDEN Robotics closes it through iteration. When a robot encounters a new pattern in the field, that pattern is fed back into the environment generation system. The simulation becomes more diverse, the model is retrained, and the improved version is redeployed. Field feedback flows back into simulation, and simulation results go back to the field.

DIDEN Robotics’ unique experience of repeating this cycle in real shipyard environments is progressively bringing the simulation closer to reality. The team is currently preparing to test this perception AI for autonomous navigation and task execution in real-world environments.

3D voxel map reconstructed by the Voxel Reconstruction model (left: real environment, center: depth sensor input, right: model reconstruction)

What Comes After ‘Eyes’

Perception is just the beginning. After recognizing the environment, the robot must accurately estimate its own state, then execute physical movement based on that information. The next installments in this series will cover each in turn.

This perception pipeline is being applied not only to quadruped robots but also to the bipedal robot platform currently in development at DIDEN Robotics. By adjusting the parameters of the environment generation system, training data for different structural environments can be produced.

From hardware design to simulation environment construction, training framework, and perception AI training — developing the full pipeline in-house is how Physical AI company DIDEN Robotics builds a robot’s eyes.

South Korea

© Copyright DIDEN Robotics. All Rights Reserved.

Diden Robotics Co., Ltd.

Representative: Junny (Joon-Ha) Kim

Contact: diden@didenrobotics.com / Phone: 02-6959-0642 / Fax: 02-6959-0643

49 Achasan-ro 17-gil, Seongdong-gu, Seoul, Rooms 401, 402, 409, 410 (04799)

Business Registration Number: 867-87-03056
